Deepfake attack: 'Many people could have been cheated'

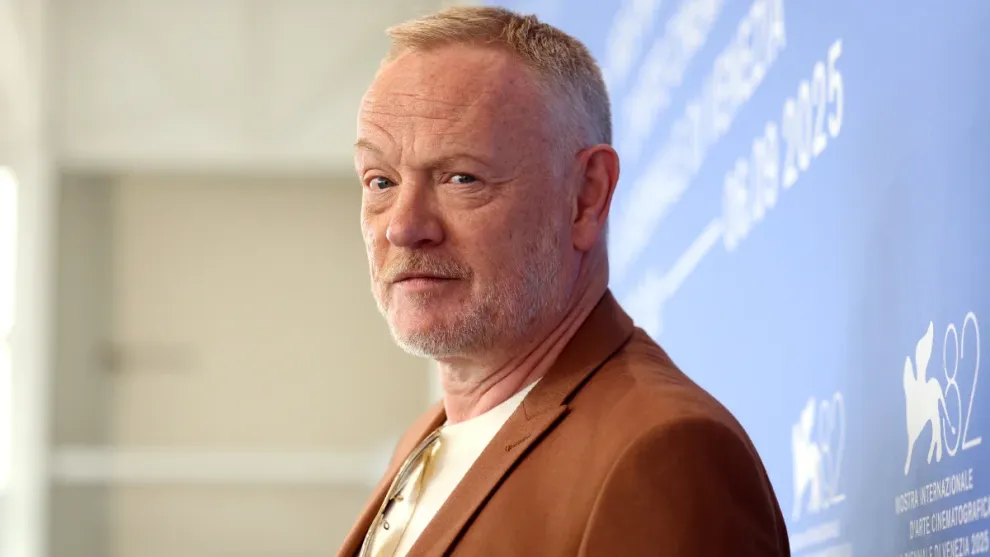

The article describes a real case of a deepfake investment scam in which AI-generated video was used to impersonate a trusted public figure and mislead viewers into making financial decisions. The fake video appeared convincing and was distributed online, where it could easily reach a large audience.

The impersonation created the illusion that the individual was giving legitimate financial advice, encouraging people to invest or trade based on false information. Because the content looked authentic, many viewers could have been deceived into losing money.

The case highlights how accessible deepfake technology has become. With minimal effort, attackers can clone faces and voices to create highly realistic content that spreads quickly on social media and other platforms.

A key issue is that many people cannot reliably distinguish between real and AI-generated media. This makes deepfake scams particularly effective, especially when they exploit trust in recognizable figures or authority.

The article emphasizes that these attacks are not isolated incidents but part of a growing trend in AI-driven fraud. Deepfakes are increasingly used for financial scams, misinformation, and manipulation, often at large scale.

Overall, the story warns that deepfake attacks can affect a large number of people, especially as the technology becomes more convincing and widely available. That makes awareness and verification critical.