The Deepfake Nudes Crisis in Schools Is Much Worse Than You Thought

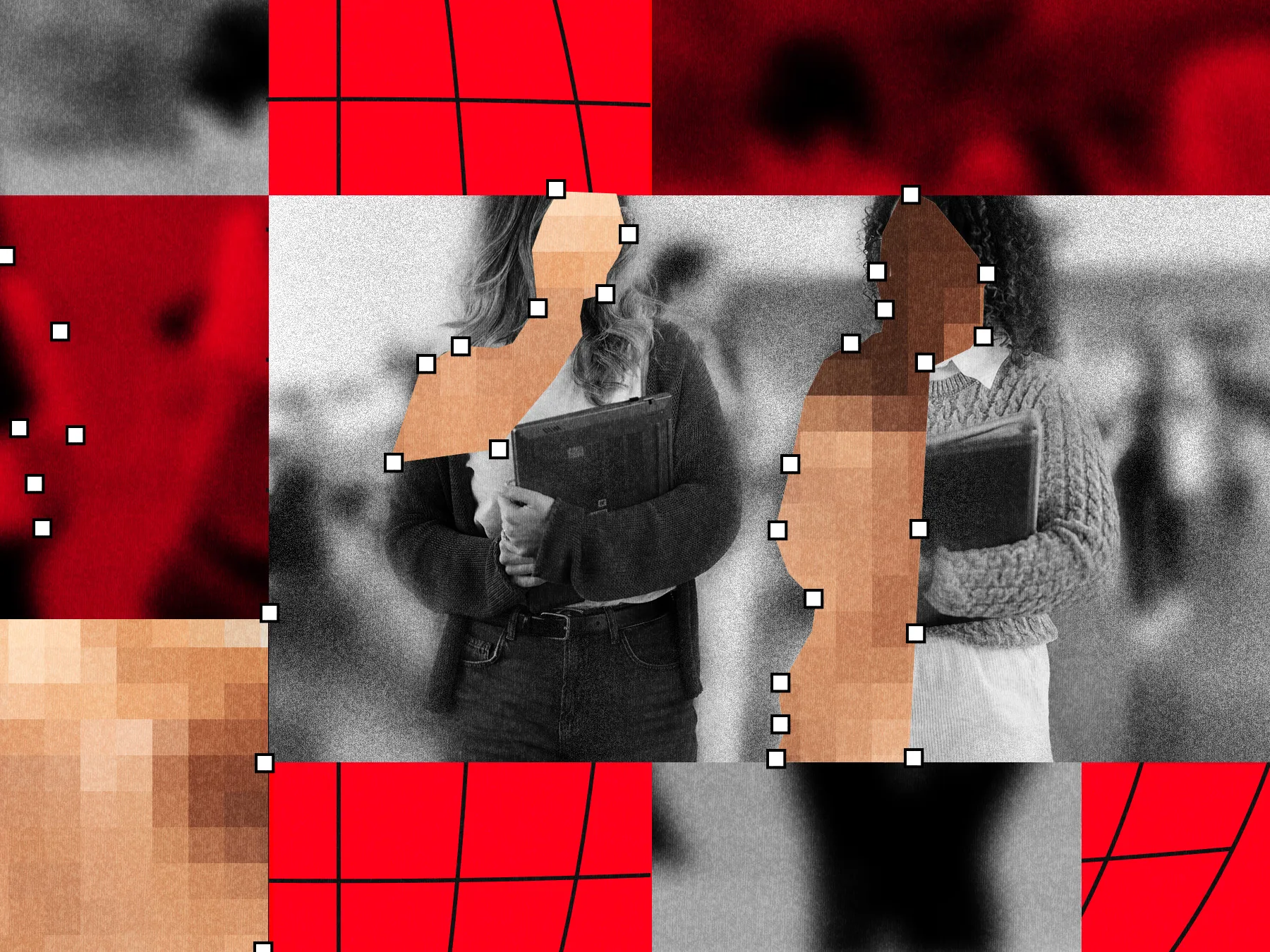

WIRED reports that AI-generated deepfake nude images are becoming a global crisis in schools. A joint analysis by WIRED and Indicator found publicly reported incidents at nearly 90 schools, affecting more than 600 students across at least 28 countries since 2023. Most cases involve teenage boys using “nudify” apps to create fake sexual images of female classmates from ordinary social media photos.

The article says the real scale is probably much larger because many cases are never reported publicly or are handled privately by schools and police. Other research cited by WIRED suggests the problem is widespread: UNICEF estimated that sexual deepfakes were created of 1.2 million children last year, while Thorn found that one in eight teens knows someone who has been targeted.

Victims often experience severe distress, humiliation and fear that the images will spread online permanently. Some students avoid school, while families say schools and police are frequently unprepared or too slow to respond. In many cases, the images qualify as child sexual abuse material because they depict minors in explicit fake content.

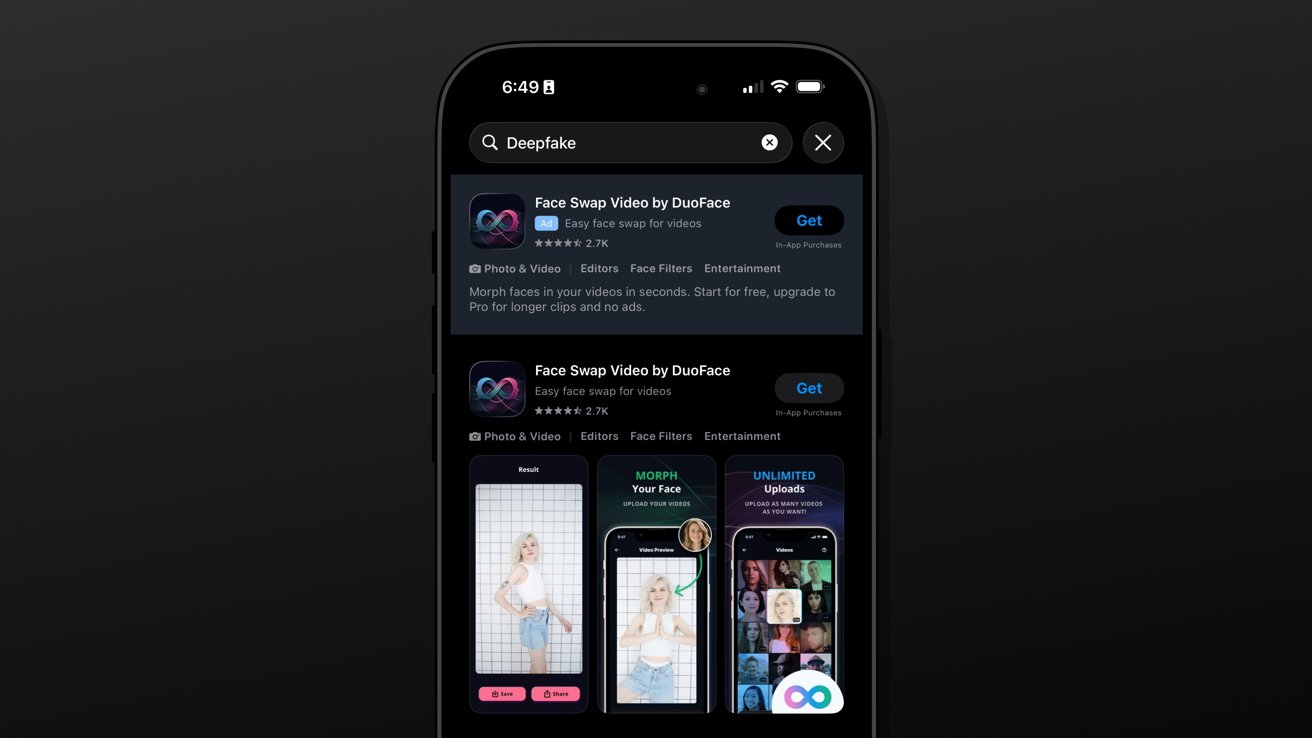

The article explains that AI has made the abuse easier, faster and more scalable. Nudification apps, bots and websites now allow users with little technical skill to create convincing sexualized images in a few clicks. Experts say the motivation is not always sexual; it can also involve humiliation, revenge, social control or peer pressure.

Some schools have started changing how they publish student images, including limiting yearbook photos or avoiding public social media posts that show students clearly. Legal and policy responses are also growing, including the Take It Down Act in the U.S., proposed bans on nudification apps in the UK and EU, and enforcement action in Australia. Still, WIRED concludes that schools remain underprepared for both sexual deepfakes and other forms of AI-generated abuse targeting students and teachers.